Stay up to date with Reveal

The Reveal business intelligence blog gives you the latest embedded analytics trends, how-tos, best practices, and product news.

Vibe Coding Analytics: Can You Really Build Instead of Buy?

Vibe coding analytics is changing how SaaS teams approach build-vs-buy decisions. AI makes it easy to generate dashboards, test ideas, and move fast early. But early speed does not translate into success in production. Customer-facing analytics requires governance, security, and cost control, which are areas where AI alone falls short. As AI raises expectations from dashboards to embedded intelligence, teams must decide whether to build and own that complexity or adopt a platform designed for production analytics.

SLM vs. LLM: Which AI Model is Right for Embedded Analytics?

Modern embedded analytics is shifting from static dashboards to AI-driven interaction inside SaaS products. As teams embed conversational capabilities into their analytics, they must choose between small and large language models. The SLM vs. LLM choice affects latency, token costs, governance, and deployment flexibility. Small models often handle frequent analytics queries efficiently, while large models support deeper reasoning. Many organizations adopt hybrid architectures that combine both. Platforms like Reveal allow teams to add AI to their analytics layer without sacrificing cost predictability, governance, or deployment flexibility.
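
A hybrid architecture usually needs a router that decides which model handles each question. The sketch below is a minimal, hypothetical keyword-and-length heuristic, not Reveal's implementation: the model names, the `REASONING_HINTS` list, and the 25-word threshold are all assumptions chosen for illustration. Production routers often use a classifier or the small model itself to make this call.

```python
from dataclasses import dataclass

# Hypothetical model identifiers; substitute the SLM/LLM endpoints you actually run.
SMALL_MODEL = "small-model"
LARGE_MODEL = "large-model"

# Assumed phrases that suggest a question needs multi-step reasoning.
REASONING_HINTS = ("why", "explain", "compare", "forecast", "what if", "root cause")


@dataclass
class RoutingDecision:
    model: str
    reason: str


def route_query(question: str, max_small_words: int = 25) -> RoutingDecision:
    """Send short, lookup-style analytics questions to the small model and
    longer or reasoning-heavy questions to the large model."""
    text = question.lower()
    # Simple substring check; a real router would use something more robust.
    if any(hint in text for hint in REASONING_HINTS):
        return RoutingDecision(LARGE_MODEL, "reasoning keyword")
    if len(text.split()) > max_small_words:
        return RoutingDecision(LARGE_MODEL, "long question")
    return RoutingDecision(SMALL_MODEL, "simple lookup")
```

With this heuristic, "Show total revenue by region for last month" stays on the cheaper small model, while "Why did churn increase in Q3?" escalates to the large one.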

AI Token Costs In Embedded Analytics: Why They’re Becoming a CIO Problem

AI token cost is now a line item in the CIO’s budget, especially for SaaS teams shipping AI-powered embedded analytics. Every natural language query, generated dashboard, and automated insight inside your embedded analytics layer burns tokens from large language models. Across a multi-tenant SaaS platform with thousands of users, that adds up fast. Controlling AI token consumption requires real governance: guardrails, model flexibility, and usage monitoring. Reveal built these controls into its AI-powered embedded analytics from day one, so your team can scale AI analytics without watching costs spiral.
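
To see how token spend scales across tenants, it helps to write the arithmetic down. The sketch below assumes per-1,000-token pricing with separate input and output rates and a flat number of queries per user per day; all numbers are hypothetical inputs, not published prices. It also shows one simple guardrail, a per-tenant monthly token budget, as an illustrative assumption rather than how Reveal enforces limits.

```python
def monthly_token_cost(
    users: int,
    queries_per_user_per_day: float,
    input_tokens_per_query: int,
    output_tokens_per_query: int,
    price_in_per_1k: float,
    price_out_per_1k: float,
    days: int = 30,
) -> float:
    """Estimate monthly LLM spend, assuming per-1k-token input/output pricing."""
    per_query = (input_tokens_per_query / 1000) * price_in_per_1k + (
        output_tokens_per_query / 1000
    ) * price_out_per_1k
    return users * queries_per_user_per_day * days * per_query


class TenantBudget:
    """Hypothetical per-tenant guardrail: reject requests once the monthly cap is hit."""

    def __init__(self, monthly_limit_tokens: int) -> None:
        self.limit = monthly_limit_tokens
        self.used = 0

    def try_consume(self, tokens: int) -> bool:
        # Refuse the request instead of letting usage exceed the cap.
        if self.used + tokens > self.limit:
            return False
        self.used += tokens
        return True
```

For example, 5,000 users asking 10 questions a day, at 2,000 input and 500 output tokens per question and made-up prices of 0.001 and 0.002 per 1,000 tokens, works out to about 4,500 per month, which is why small per-query costs become a budget line at multi-tenant scale.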

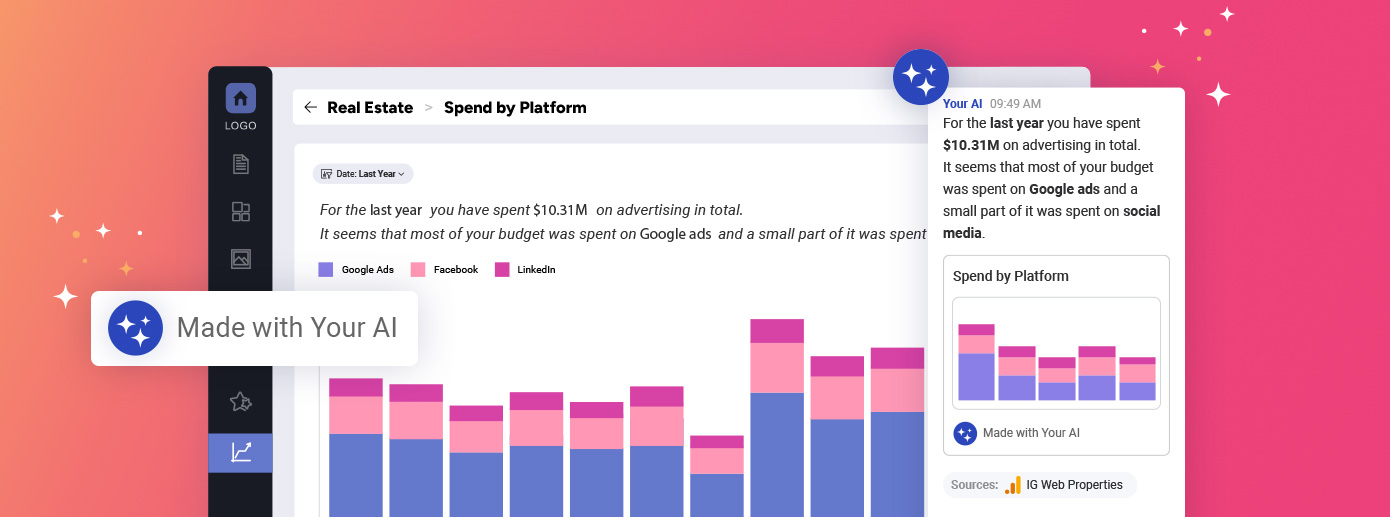

How to Build AI-Generated Dashboards from User-Defined Queries

AI-generated dashboards promise faster insight, but most implementations fail in real products. The issue is not model quality. It is architecture.

Production-ready AI-generated dashboards must operate inside the analytics lifecycle, not outside it. That means intent detection rather than query generation, metadata rather than SQL, and reuse rather than constant creation. When AI respects security, business language, and existing workflows, dashboards become durable product assets.

This approach shifts analytics from one-off answers to embedded decision support that scales across users, tenants, and use cases.
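
The "intent, metadata, reuse" idea can be sketched in a few lines. The example below is a hypothetical illustration, not Reveal's API: it maps business terms in a question to governed field names through an assumed glossary (`METRICS`, `DIMENSIONS`), emits a dashboard definition as metadata instead of SQL, and caches definitions by intent so differently worded questions reuse the same dashboard. Real intent detection would use a model rather than word lookup.

```python
from dataclasses import dataclass
from typing import Dict, Optional

# Hypothetical business glossary: words users type -> governed field names.
METRICS = {"revenue": "total_revenue", "sales": "total_revenue", "churn": "churn_rate"}
DIMENSIONS = {"region": "region", "product": "product_line", "month": "order_month"}


@dataclass(frozen=True)
class Intent:
    metric: str
    dimension: Optional[str]


def detect_intent(question: str) -> Optional[Intent]:
    """Naive word lookup standing in for model-based intent detection."""
    words = question.lower().replace("?", "").split()
    metric = next((METRICS[w] for w in words if w in METRICS), None)
    if metric is None:
        return None  # Unknown metric: ask the user rather than guess.
    dimension = next((DIMENSIONS[w] for w in words if w in DIMENSIONS), None)
    return Intent(metric, dimension)


# Dashboard definitions keyed by intent, so equivalent questions reuse one asset.
_dashboard_cache: Dict[Intent, dict] = {}


def dashboard_for(question: str) -> Optional[dict]:
    intent = detect_intent(question)
    if intent is None:
        return None
    if intent not in _dashboard_cache:
        # Metadata only; the analytics engine (not the model) turns this into a query.
        _dashboard_cache[intent] = {
            "visualization": "bar" if intent.dimension else "kpi",
            "metric": intent.metric,
            "group_by": intent.dimension,
        }
    return _dashboard_cache[intent]
```

Here "Show revenue by region" and "What were sales by region?" resolve to the same cached definition. Because the output is metadata, the platform's existing security and semantic layer still decide what query actually runs.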

Conversational Analytics in Embedded Analytics

Conversational analytics gives users a faster way to get insights by letting them ask direct questions instead of building reports. It reduces friction across the product and helps teams deliver clear answers without extra clicks or technical steps. The challenge appears when conversational analytics software relies on external AI services, which creates security and data-control risks. Reveal solves this with an architecture that keeps AI inside your environment and applies your existing rules to every request. You get a secure, flexible layer that supports natural-language queries without exposing your data.
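
Applying existing rules to every request means the AI's output is treated as untrusted input to your own authorization layer. The sketch below assumes a hypothetical `QuerySpec` produced by the model and a `UserContext` carrying the tenant and permitted metrics; it is an illustration of the pattern, not Reveal's architecture.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class QuerySpec:
    """Hypothetical query description produced from a natural-language question."""
    metric: str
    filters: Dict[str, str] = field(default_factory=dict)


@dataclass
class UserContext:
    tenant_id: str
    allowed_metrics: List[str]


def enforce_rules(spec: QuerySpec, user: UserContext) -> QuerySpec:
    """Re-apply the caller's permissions to whatever the model generated."""
    if spec.metric not in user.allowed_metrics:
        raise PermissionError(f"metric {spec.metric!r} is not permitted for this user")
    # Overwrite rather than trust: the model cannot choose a different tenant.
    filters = dict(spec.filters)
    filters["tenant_id"] = user.tenant_id
    return QuerySpec(spec.metric, filters)
```

Even if a prompt tricks the model into emitting `tenant_id="other"`, the enforced spec always carries the caller's own tenant, and disallowed metrics are rejected before any query runs.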